There is a quiet conversation happening inside boardrooms right now, and it sounds like this: “We have AI covered. The team is using ChatGPT.”

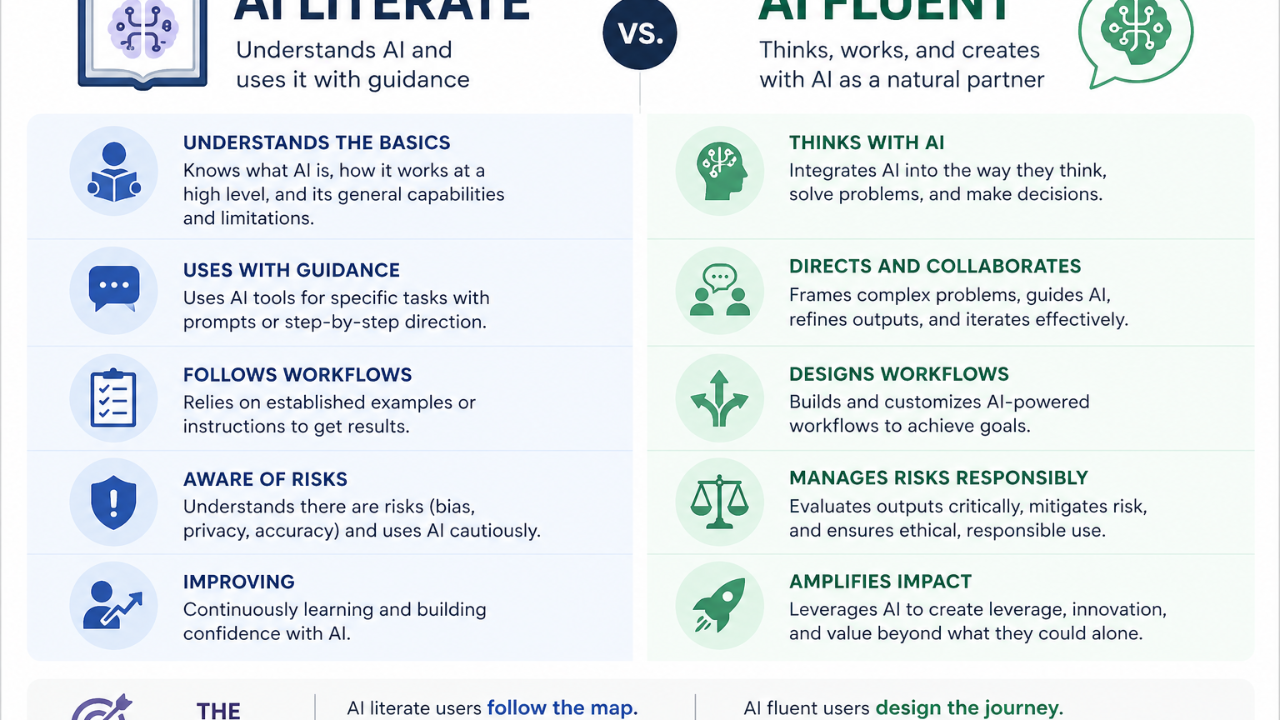

That is not AI coverage. That is AI literacy. And literacy is no longer the finish line. It is the floor.

In February 2026, the US Department of Labor published an AI literacy framework that defines AI literacy plainly: a foundational level of knowledge and skill that all workers should have as AI becomes embedded across the economy. Foundational. Baseline. Not advanced. The framework explicitly acknowledges that “many roles will require more advanced capabilities beyond this foundational level, such as managing and building AI systems.” [1]

Translation for the C-suite: typing a prompt into a chatbot is now table stakes. It is the equivalent of being able to open Excel. Your team is not differentiated. Your company is not differentiated. You are not differentiated.

Fluency is the next tier, and most executives have no map for it.

The Framework Educators Have Been Using for Years

The distinction between literacy and fluency is not something I invented. Educators, including a team at Penn State whose work appeared in EDUCAUSE Review in 2019, have been teaching an arc that runs from competent to literate to fluent. Competent means you can operate the tool. Literate means you understand the context around it: its implications and its risks. Fluent means you can use the tool to create something new, transfer your skills across tools, and make nuanced judgments about when and how to deploy them. [2]

Language learning uses the same distinction. A literate person can read and understand. A fluent person can compose. A poem, a conversation, an argument, a joke. Fluency is generative. [3]

Apply that to AI.

A literate executive can ask ChatGPT to summarize a memo. A fluent executive can string several tools together: deploy an agent to do the research, route the output through a second model to critique it, hand the result to a third to format it, and trust her own judgment about when each of those steps needs human review. She can also tell you why she made each of those choices, what the tradeoffs are, and what she would do differently if the budget were cut in half.

One of those executives gets the same answer a college intern could pull up. The other is running a system.

Why the Confusion Is So Expensive

McKinsey’s 2026 State of AI research reports that 88 percent of companies now use AI in some form, but only 6 percent are seeing clear financial returns, and only 1 percent of executives rate their own AI rollouts as mature. [4]

Six percent. One percent.

That gap between “we use AI” and “we get value from AI” is the literacy-fluency gap, showing up at organizational scale. Companies whose leaders stopped at literacy are pouring money into tools their teams use once and abandon, because no one at the top knows what a mature AI workflow actually looks like. They cannot evaluate vendors. They cannot steer their teams. They cannot tell when a pitch is snake oil and when it is a real leap. They miss the shift from chat to agents. They miss the shift from standalone tools to features absorbed inside other platforms. They keep buying the same capability three times because they do not recognize they already own it.

That is the part that costs money. And reputation. And a seat at the table three years from now.

Fluency Is Not a Technical Skill

Here is what I have learned from coaching executives on this. I am a former tech founder who came to AI from a non-technical background, and I sold my company to Mattel in 2022. Pedigree is not what made me fluent. Hands-on hours did.

Fluency is not code. It is not certifications. It is not a weekend at an MIT workshop.

Fluency is hands-on hours with multiple tools, making real things, breaking them, and building the judgment to tell a tool that is ready for your team from one that is not.

The executives closing this gap fastest are not the ones reading about AI. They are the ones using it to build something they actually needed, watching it fail, fixing it, and then understanding why the fix worked. They wrote a prompt, got a mediocre answer, asked a second model to stress-test it, and noticed the difference. They built a small agent to handle an annoying calendar task and were surprised by how much it got right and where it got things wrong.

If you can run a P&L, you can get fluent. The barrier is not intelligence and it is not a technical background. The barrier is time, privacy, and the willingness to be bad at something before you are good at it. The first two are the reasons most executives stall. The last one is the reason they stay stalled.

The Question Worth Sitting With

For the executive who suspects she has been living with literacy and calling it fluency, the question is not “What did I prompt this month?”

The question is: what did I build?

Sources

[1] U.S. Department of Labor, “US Department of Labor releases AI literacy framework,” February 13, 2026.

[2] EDUCAUSE Review, “Competent, Literate, Fluent: The What and Why of Digital Initiatives,” April 2019.

[3] School Catalogue Information Service (SCIS), “Digital fluency vs. digital literacy,” Connections, Issue 111.

[4] McKinsey & Company, “State of AI trust in 2026: Shifting to the agentic era,” 2026.

Disclaimer: For those of you warming up in the comments, yes, I obviously used AI to write this. That is my whole point: the ideas are mine, drawn from a five-page free-flowing brain dump and from real conversations I have had with people at all ends of the AI knowledge spectrum. AI helped me organize, tighten, and get the words on the page faster than I could on my own. I have been telling you throughout this series that AI is not here to replace you; it is here to make you more efficient. This article is the proof.