There is a popular framework in adult learning called the cone of learning that claims people remember 10 percent of what they read, 20 percent of what they hear, 50 percent of what they see demonstrated, and 90 percent of what they do.

Those specific percentages are not real. In 1970, an unidentified source superimposed them onto Edgar Dale's original work, and they have been repeated ever since.

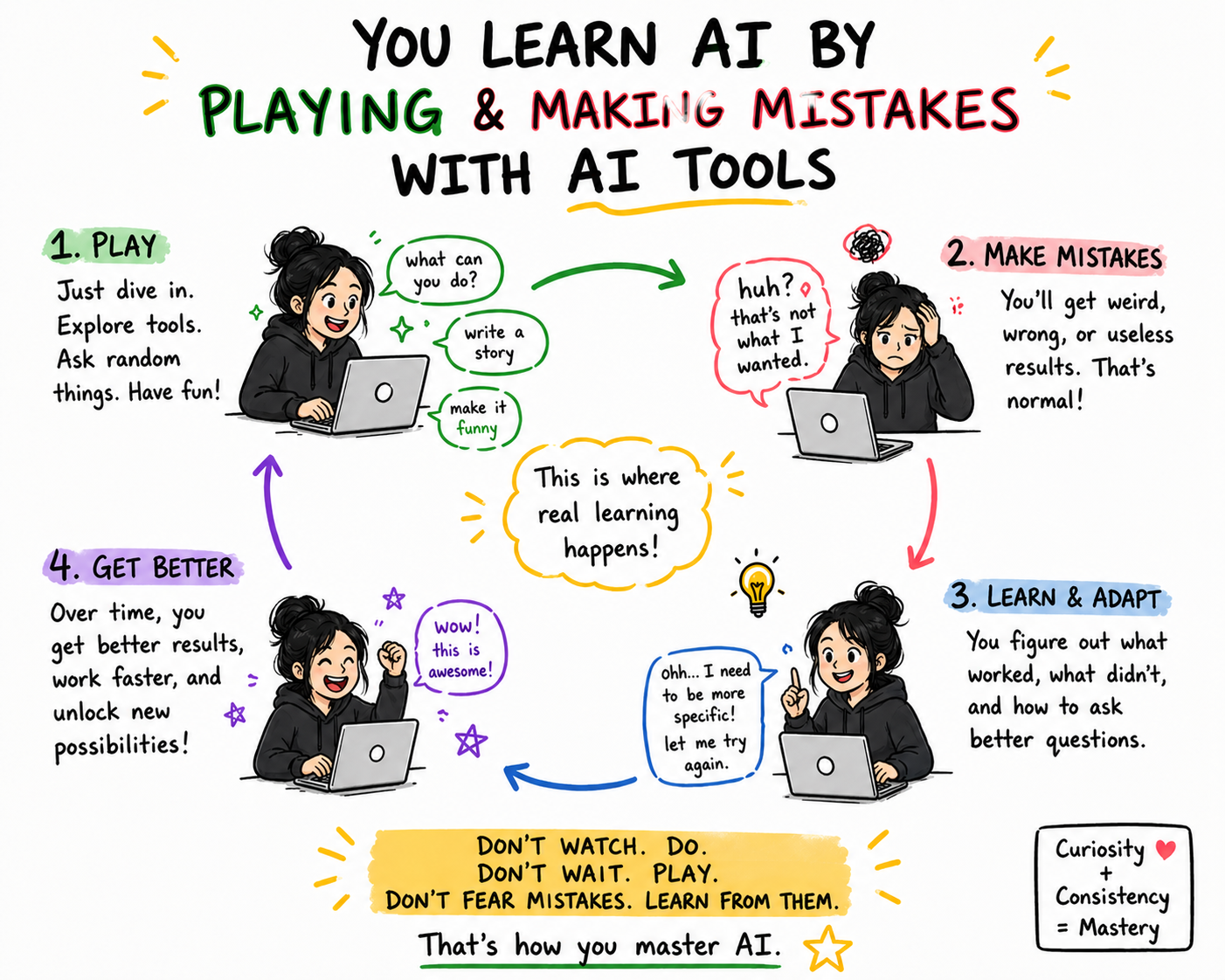

The underlying principle, however, is well-supported by research. Learning sticks when the learner is cognitively engaged with the material. Watching is the lowest engagement state. Doing, especially doing imperfectly and then having to fix what broke, is the highest.

This is the reason I stopped recommending AI demos and AI explainer videos to my coaching clients. They do not work. Not because the demos are bad, but because the format is wrong for what executives actually need to learn.

Why Demos Fail for AI Specifically

Most professional content about AI right now is built around demos. A LinkedIn post showing slick automation. A vendor pitch deck running through a flashy use case. A keynote stage with a live build.

None of those teach you AI. They teach you that someone else can do AI.

There are three reasons this gap is bigger for AI than it was for previous technology shifts.

First, AI feels intuitive in demos because the demos are curated. The person showing you the workflow has already failed at it twenty times and is now showing you the version that works. The reality of using these tools is closer to the twenty failures than the one polished result.

Second, AI has a much shorter feedback loop than the software you watched in demos a decade ago. You can copy what someone did on stage, type it into your own tools, and have the answer come back wrong within three seconds. The disorientation is not a sign you are bad at AI. It is a sign you are now actually doing AI, which the demo skipped.

Third, AI tools change faster than demo recordings can keep up. The exact prompt or workflow that worked in last month's demo may behave differently this month because the underlying model updated. Watching a demo is studying for a test that has already changed.

What Hands-On Actually Looks Like

The minimum viable engagement is ten minutes a day for a week.

Pick something on your calendar that you do not want to do. A weekly summary you have to write. A scheduling back-and-forth that eats your Tuesday. A formatting task you keep delegating to someone who has better things to do.

Open whichever AI tool is closest to your hand. Try to get it to do the thing.

It will fail the first time. The output will be too long. Or the wrong tone. Or it will hallucinate a fact. Notice the failure mode. Try a different prompt. Try a different tool. Add an instruction you forgot. Watch what changes.

That is the entire curriculum. Over the course of seven days, you will internalize more about how AI tools actually work than you would from watching a hundred demos.

What Changes After Seven Days of Trying

Your brain rewires around the actual capabilities of the tools, not the marketed capabilities. You stop being impressed by features that don't actually save you time. You start noticing which tools are genuinely useful for which kinds of tasks. You can sit through a vendor pitch and ask the kinds of questions a fluent executive asks, instead of nodding at the demo and looking at your phone.

You also start spotting the gaps. The places where the technology is not ready yet. The places where it overpromises. The places where it has quietly leapfrogged something you used to pay for.

None of those instincts are downloadable from an explainer video. They have to be built by hand.

The Honest Framing

Demos serve a purpose. They show you a destination. They cannot get you there.

The executives I see closing the AI gap fastest are the ones who treat the demo as the trailer, not the movie. They watch it, they get curious, and then they close the tab and go try the thing themselves with whatever messy half-real task is on their desk.

The executives who stay stuck do the opposite. They watch more demos. They forward more articles. They book more lunches with vendors. They are gathering information instead of building skill, and they assume the gathering will eventually convert. It will not.

The Question Worth Sitting With

How many AI demos have you watched this month?

How many AI tasks have you tried and failed at this month?

If the second number is smaller than the first, you are studying for a test you have not yet shown up to take.

Sources

[1] Training Industry, "Everything You Think You Know about Learning Retention Rates is Wrong."

[2] METR, "Measuring AI Ability to Complete Long Tasks," March 2025.

For those of you warming up in the comments, yes, I obviously used AI to write this. That's my whole point: the ideas are mine, drawn from a five-page free-flowing brain dump and from real conversations I've had with people at all ends of the AI knowledge spectrum. AI helped me organize, tighten, and get the words on the page faster than I could on my own. I have been telling you throughout this series that AI is not here to replace you; it is here to make you more efficient. This article is the proof.